This advanced example shows how to process text data with recipes and use them in a predictive model. It also has an example of extracting information from each model fit for later use.

The data are from Amazon:

“This dataset consists of reviews of fine foods from amazon. The data span a period of more than 10 years, including all ~500,000 reviews up to October 2012. Reviews include product and user information, ratings, and a plaintext review.”

A small subset of the data are contained here; we sampled a single review from 5,000 random products and 80% of these data were used as the training set. The remaining 1,000 were used as the test set.

There is a column for the product, a column for the text of the review, and a factor column for a class variable. The outcome is whether the reviewer game the product a five-star rating or not.

library(tidymodels) data("small_fine_foods") training_data #> # A tibble: 4,000 x 3 #> product review score #> <chr> <chr> <fct> #> 1 B000J0LSBG "this stuff is not stuffing its not good at all save yo… other #> 2 B000EYLDYE "I absolutely LOVE this dried fruit. LOVE IT. Whenever I … great #> 3 B0026LIO9A "GREAT DEAL, CONVENIENT TOO. Much cheaper than WalMart and… great #> 4 B00473P8SK "Great flavor, we go through a ton of this sauce! I discove… great #> 5 B001SAWTNM "This is excellent salsa/hot sauce, but you can get it for … great #> 6 B000FAG90U "Again, this is the best dogfood out there. One suggestion… great #> 7 B006BXTCEK "The box I received was filled with teas, hot chocolates, a… other #> 8 B002GWH5OY "This is delicious coffee which compares favorably with muc… great #> 9 B003R0MFYY "Don't let these little tiny cans fool you. They pack a lo… great #> 10 B001EO5ZXI "One of the nicest, smoothest cup of chai I've made. Nice m… great #> # … with 3,990 more rows

The idea is to process the text data into features and use these features to predict whether the review was five-star or not.

Recipe and Model Specifications

The data processing steps are:

create an initial set of features based on simple word/character scores, such as the number of words, URLs and so on; The

textfeatureswill be used for thisthe text is tokenized (i.e. broken into smaller components such as words)

stop words (such as “the”, “an”, etc.) are removed

tokens are stemmed to a common root where possible

tokens are converted to dummy variables via a signed, binary hash function

non-token features are optionally transformed to a more symmetric state using a Yeo-Johnson transformation

predictors with a single distinct value are removed

all predictors are centered and scaled.

Some of these steps may or may not be good ideas (such as stemming). In this process, the main tuning parameter will be the number of feature hash features to use.

A recipe will be used to implement this. We’ll also need some helper objects. For example, for the Yeo-Johnson transformation, we need to know the initial feature set:

library(textfeatures) basics <- names(textfeatures:::count_functions) head(basics) #> [1] "n_words" "n_uq_words" "n_charS" "n_uq_charS" "n_digits" #> [6] "n_hashtags"

Also, the implementation of feature hashes does not produce binary values. This small function will help convert the scores to values of -1, 0, or 1:

The recipe is:

library(textrecipes) pre_proc <- recipe(score ~ product + review, data = training_data) %>% # Do not use the product ID as a predictor update_role(product, new_role = "id") %>% # Make a copy of the raw text step_mutate(review_raw = review) %>% # Compute the initial features. This removes the `review_raw` column step_textfeature(review_raw) %>% # Make the feature names shorter step_rename_at( starts_with("textfeature_"), fn = ~ gsub("textfeature_review_raw_", "", .) ) %>% step_tokenize(review) %>% step_stopwords(review) %>% step_stem(review) %>% # Here is where the tuning parameter is declared step_texthash(review, signed = TRUE, num_terms = tune()) %>% # Simplify these names step_rename_at(starts_with("review_hash"), fn = ~ gsub("review_", "", .)) %>% # Convert the features from counts to values of -1, 0, or 1 step_mutate_at(starts_with("hash"), fn = binary_hash) %>% # Transform the initial feature set step_YeoJohnson(one_of(!!basics)) %>% step_zv(all_predictors()) %>% step_normalize(all_predictors())

Note that, when objects from the global environment are used, they are injected into the step objects via !!. For some parallel processing technologies, these objects may not be found by the worker processes.

To model these data, a regularized logistic regression model will be used:

lr_mod <- logistic_reg(penalty = tune(), mixture = tune()) %>% set_engine("glmnet")

Three tuning parameters should be trained for this data analysis.

Resampling

There are enough data here such that 10-fold resampling would hold out 400 reviews at a time to estimate performance. Performance estimates using this many observations have sufficiently low noise to measure and tune models.

Grid Search

A regular grid is used. For glmnet models, evaluating penalty values is fairly cheap due to the use of the “submodel-trick”. The grid will use 20 penalty values, 5 mixture values, and 3 values for the number of hash features.

five_star_grid <- expand.grid( penalty = 10^seq(-3, 0, length = 20), mixture = seq(0, 1, length = 5), num_terms = 2^c(8, 10, 12) )

Note that, for each resample, the text processing recipe is only prepped 6 times. This increases the computational efficiency of the analysis by avoiding redundant work.

For illustration, we will save information on the number of predictors by penalty value for each glmnet model. This might help use understand how many features were used across the penalty values. An extraction function is used to do this:

glmnet_vars <- function(x) { # `x` will be a workflow object mod <- extract_model(x) # `df` is the number of model terms for each penalty value tibble(penalty = mod$lambda, num_vars = mod$df) } ctrl <- control_grid(extract = glmnet_vars, verbose = TRUE)

Finally, let’s run the grid search:

roc_scores <- metric_set(roc_auc) set.seed(1559) five_star_glmnet <- tune_grid(lr_mod, pre_proc, resamples = folds, grid = five_star_grid, metrics = roc_scores, control = ctrl) #> Loading required package: Matrix #> #> Attaching package: 'Matrix' #> The following objects are masked from 'package:tidyr': #> #> expand, pack, unpack #> Loaded glmnet 4.0-2 five_star_glmnet #> # Tuning results #> # 10-fold cross-validation #> # A tibble: 10 x 5 #> splits id .metrics .notes .extracts #> <list> <chr> <list> <list> <list> #> 1 <split [3.6K/400]> Fold01 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 2 <split [3.6K/400]> Fold02 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 3 <split [3.6K/400]> Fold03 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 4 <split [3.6K/400]> Fold04 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 5 <split [3.6K/400]> Fold05 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 6 <split [3.6K/400]> Fold06 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 7 <split [3.6K/400]> Fold07 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 8 <split [3.6K/400]> Fold08 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 9 <split [3.6K/400]> Fold09 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5… #> 10 <split [3.6K/400]> Fold10 <tibble [300 × 7… <tibble [0 × 1… <tibble [300 × 5…

This took a while to complete. What did the results look like? Let’s get the resampling estimates of the area under the ROC curve for each tuning parameter:

grid_roc <- collect_metrics(five_star_glmnet) %>% arrange(desc(mean)) grid_roc #> # A tibble: 300 x 9 #> penalty mixture num_terms .metric .estimator mean n std_err .config #> <dbl> <dbl> <dbl> <chr> <chr> <dbl> <int> <dbl> <chr> #> 1 0.0379 0.25 4096 roc_auc binary 0.816 10 0.00753 Preprocesso… #> 2 0.0183 0.5 4096 roc_auc binary 0.814 10 0.00776 Preprocesso… #> 3 0.0127 0.75 4096 roc_auc binary 0.814 10 0.00781 Preprocesso… #> 4 0.00886 1 4096 roc_auc binary 0.813 10 0.00794 Preprocesso… #> 5 0.0264 0.25 4096 roc_auc binary 0.813 10 0.00790 Preprocesso… #> 6 0.0546 0.25 4096 roc_auc binary 0.811 10 0.00805 Preprocesso… #> 7 0.0127 0.5 4096 roc_auc binary 0.811 10 0.00810 Preprocesso… #> 8 0.00886 0.75 4096 roc_auc binary 0.810 10 0.00803 Preprocesso… #> 9 0.0264 0.5 4096 roc_auc binary 0.810 10 0.00812 Preprocesso… #> 10 0.0127 1 4096 roc_auc binary 0.810 10 0.00808 Preprocesso… #> # … with 290 more rows

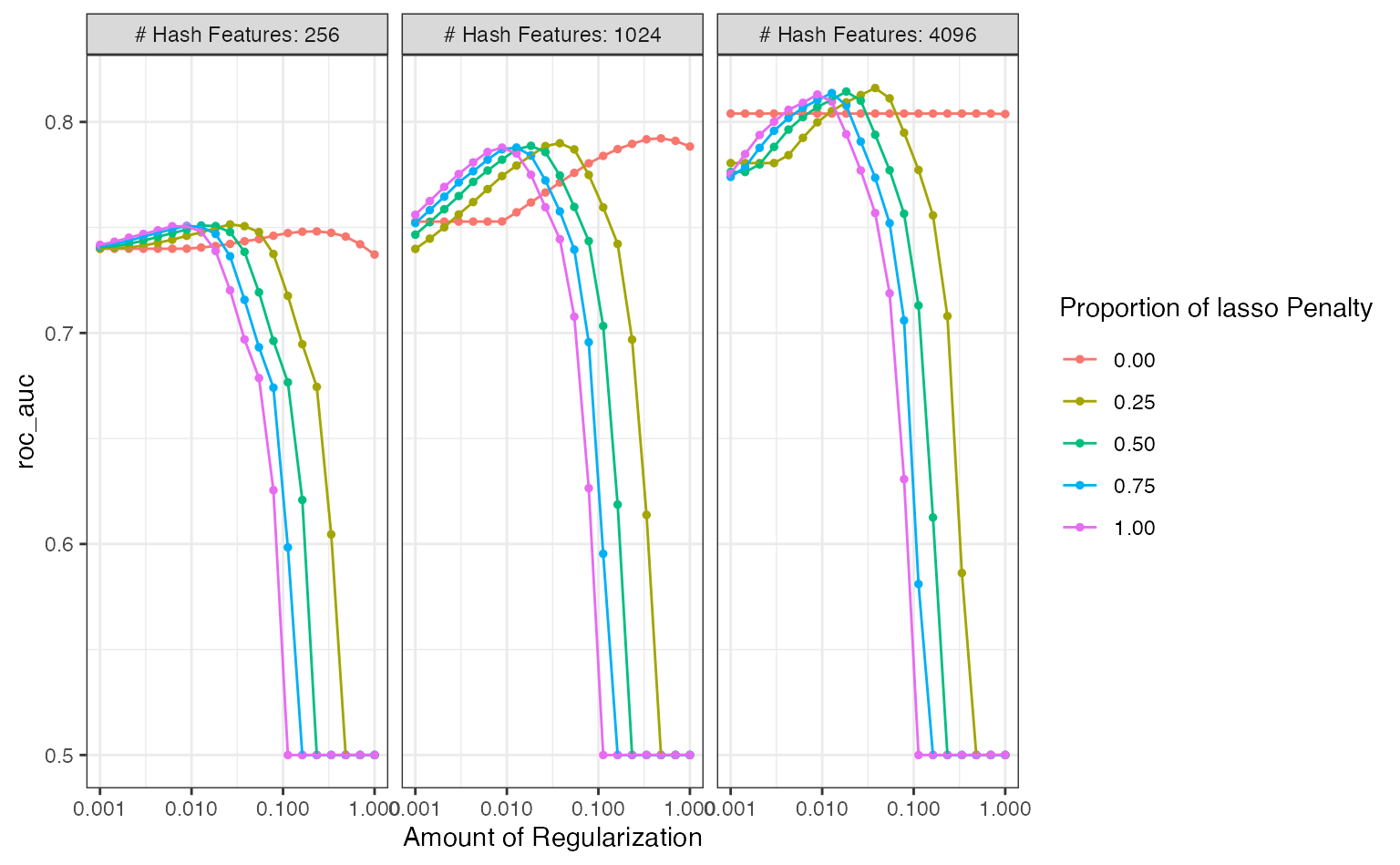

The best results had a fairly high penalty value and focused on the ridge penalty (i.e. no feature selection via the lasso’s L1 penalty). The best solutions also used the largest number of hashing features.

What was the relationship between performance and the tuning parameters?

autoplot(five_star_glmnet, metric = "roc_auc")

There is definitely an effect due to the number of features used1. The profiles with mixture values greater than zero had steep drop-offs in performance. What’s that about? Those are cases where the lasso penalty is removing too many (and perhaps all) features from the model2.

The panel with at least 4096 features shows that there are several parameter combinations that have roughly equivalent performance. A case could be made to choose a larger mixture value and less of a penalty to select a more simplistic model that contains fewer predictors. If more experimentation were conducted, a largest set of features should also be considered.

We’ll come back to the extracted glmnet components at the end of this example.

Directed Search

What if we had started with Bayesian optimization? Would a good set of conditions have been found more efficiently?

Let’s pretend that we haven’t seen the grid search results. We’ll initialize the Gaussian process model with five tuning parameter combinations chosen with a space-filling design.

It might be good to use a custom dials object for the number of hash terms. The default object, num_terms(), uses a linear range and tries to set the upper bound of the parameter using the data. Instead, let’s create a parameter set, change the scale to be log2, and define the same range as was used in grid search.

To use this, we have to merge the recipe and parsnip model object into a workflow:

library(workflows) five_star_wflow <- workflow() %>% add_recipe(pre_proc) %>% add_model(lr_mod)

Then we can extract and manipulate the corresponding parameter set:

five_star_set <- five_star_wflow %>% parameters() %>% update( num_terms = hash_range, penalty = penalty(c(-3, 0)), mixture = mixture(c(0.05, 1.00)) )

This is passed to the search function via the param_info argument.

Finally, the initial rounds of search can be biased more towards exploration of the parameter space (as opposed to staying near the current best results). If expected improvement is used as the acquisition function, the trade-off value can be slowly moved from exploration to exploitation over iterations3. tune has a built-in function called expo_decay() that can help accomplish this:

trade_off_decay <- function(iter) { expo_decay(iter, start_val = .01, limit_val = 0, slope = 1/4) }

Using these values, let’s run the search:

set.seed(12) five_star_search <- tune_bayes( five_star_wflow, resamples = folds, param_info = five_star_set, initial = 5, iter = 30, metrics = roc_scores, objective = exp_improve(trade_off_decay), control = control_bayes(verbose = TRUE) ) #> #> ❯ Generating a set of 5 initial parameter results #> ✓ Initialization complete #> #> Optimizing roc_auc using the expected improvement with variable trade-off #> values. #> #> ── Iteration 1 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7559 (@iter 0) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.01 #> i penalty=0.00251, mixture=0.0528, num_terms=260 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7475 (+/-0.0102) #> #> ── Iteration 2 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7559 (@iter 0) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.007788 #> i penalty=0.00389, mixture=0.616, num_terms=489 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.7753 (+/-0.009) #> #> ── Iteration 3 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7753 (@iter 2) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.006065 #> i penalty=0.00101, mixture=0.463, num_terms=517 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7456 (+/-0.0107) #> #> ── Iteration 4 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7753 (@iter 2) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.004724 #> i penalty=0.00112, mixture=0.807, num_terms=4072 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7703 (+/-0.00716) #> #> ── Iteration 5 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7753 (@iter 2) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.003679 #> i penalty=0.00343, mixture=0.523, num_terms=4038 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7751 (+/-0.00694) #> #> ── Iteration 6 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7753 (@iter 2) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.002865 #> i penalty=0.00306, mixture=0.989, num_terms=1251 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.7859 (+/-0.00933) #> #> ── Iteration 7 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7859 (@iter 6) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.002231 #> i penalty=0.0174, mixture=0.991, num_terms=2349 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.7981 (+/-0.0078) #> #> ── Iteration 8 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.7981 (@iter 7) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.001738 #> i penalty=0.011, mixture=0.999, num_terms=1832 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8022 (+/-0.00809) #> #> ── Iteration 9 ───────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8022 (@iter 8) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.001353 #> i penalty=0.00632, mixture=0.943, num_terms=2806 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8035 (+/-0.0047) #> #> ── Iteration 10 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8035 (@iter 9) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.001054 #> i penalty=0.00701, mixture=0.969, num_terms=3717 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.8034 (+/-0.0061) #> #> ── Iteration 11 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8035 (@iter 9) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0008208 #> i penalty=0.00554, mixture=0.99, num_terms=3414 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.8028 (+/-0.00628) #> #> ── Iteration 12 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8035 (@iter 9) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0006393 #> i penalty=0.0137, mixture=0.987, num_terms=3233 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8162 (+/-0.00823) #> #> ── Iteration 13 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0004979 #> i penalty=0.0136, mixture=0.967, num_terms=290 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7445 (+/-0.00702) #> #> ── Iteration 14 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0003877 #> i penalty=0.0143, mixture=0.921, num_terms=4085 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.814 (+/-0.00775) #> #> ── Iteration 15 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.000302 #> i penalty=0.013, mixture=0.973, num_terms=3974 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.8099 (+/-0.00471) #> #> ── Iteration 16 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0002352 #> i penalty=0.0148, mixture=0.0565, num_terms=3023 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7757 (+/-0.00876) #> #> ── Iteration 17 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0001832 #> i penalty=0.0139, mixture=0.992, num_terms=3427 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.8021 (+/-0.00829) #> #> ── Iteration 18 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8162 (@iter 12) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0001426 #> i penalty=0.152, mixture=0.0898, num_terms=3286 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8206 (+/-0.00922) #> #> ── Iteration 19 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8206 (@iter 18) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 0.0001111 #> i penalty=0.0978, mixture=0.121, num_terms=3755 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8206 (+/-0.00836) #> #> ── Iteration 20 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8206 (@iter 19) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 8.652e-05 #> i penalty=0.575, mixture=0.156, num_terms=366 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.5 #> #> ── Iteration 21 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8206 (@iter 19) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 6.738e-05 #> i penalty=0.0705, mixture=0.108, num_terms=2883 #> i Estimating performance #> ✓ Estimating performance #> ♥ Newest results: roc_auc=0.8314 (+/-0.00731) #> #> ── Iteration 22 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 5.248e-05 #> i penalty=0.00145, mixture=0.654, num_terms=2857 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7585 (+/-0.0102) #> #> ── Iteration 23 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 4.087e-05 #> i penalty=0.0633, mixture=0.26, num_terms=297 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7461 (+/-0.00837) #> #> ── Iteration 24 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 3.183e-05 #> i penalty=0.00393, mixture=0.421, num_terms=257 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7489 (+/-0.00842) #> #> ── Iteration 25 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 2.479e-05 #> i penalty=0.0129, mixture=0.16, num_terms=2730 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7876 (+/-0.00503) #> #> ── Iteration 26 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 1.93e-05 #> i penalty=0.0659, mixture=0.0836, num_terms=3179 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.8094 (+/-0.00417) #> #> ── Iteration 27 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 1.503e-05 #> i penalty=0.181, mixture=0.13, num_terms=2383 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7755 (+/-0.0098) #> #> ── Iteration 28 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 1.171e-05 #> i penalty=0.257, mixture=0.622, num_terms=3937 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.5 #> #> ── Iteration 29 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 9.119e-06 #> i penalty=0.0122, mixture=0.103, num_terms=744 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.7777 (+/-0.00696) #> #> ── Iteration 30 ──────────────────────────────────────────────────────────────── #> #> i Current best: roc_auc=0.8314 (@iter 21) #> i Gaussian process model #> ✓ Gaussian process model #> i Generating 5000 candidates #> i Predicted candidates #> i Trade-off value: 7.102e-06 #> i penalty=0.00135, mixture=0.0737, num_terms=3215 #> i Estimating performance #> ✓ Estimating performance #> ⓧ Newest results: roc_auc=0.726 (+/-0.0066) five_star_search #> # Tuning results #> # 10-fold cross-validation #> # A tibble: 310 x 5 #> splits id .metrics .notes .iter #> <list> <chr> <list> <list> <int> #> 1 <split [3.6K/400]> Fold01 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 2 <split [3.6K/400]> Fold02 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 3 <split [3.6K/400]> Fold03 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 4 <split [3.6K/400]> Fold04 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 5 <split [3.6K/400]> Fold05 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 6 <split [3.6K/400]> Fold06 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 7 <split [3.6K/400]> Fold07 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 8 <split [3.6K/400]> Fold08 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 9 <split [3.6K/400]> Fold09 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> 10 <split [3.6K/400]> Fold10 <tibble [5 × 7]> <tibble [0 × 1]> 0 #> # … with 300 more rows

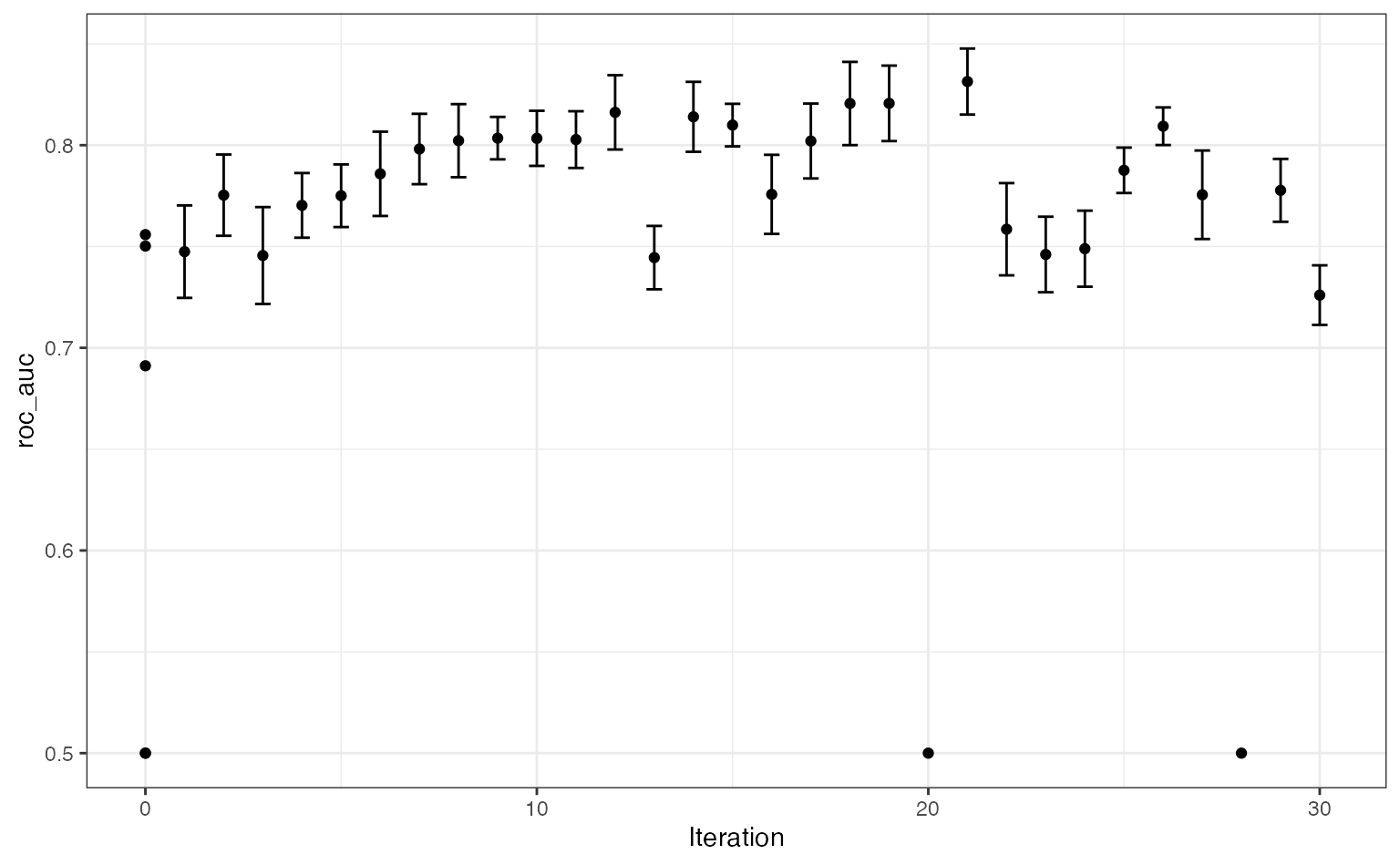

The results show some improvement over the initial set. One issue is that so many settings are sub-optimal (as shown in the figure above for grid search) so there are poor results periodically. There are regions where the penalty parameter becomes too large and all of the predictors are removed from the model. These regions are also dependent on the number of terms. There is a fairly narrow ridge4 where good performance can be achieved. Using more iterations would probably result in the search finding better results.

A plot of model performance versus the search iterations:

autoplot(five_star_search, type = "performance")

What would we do if we knew about the grid search results? In this case, we would restrict the range for the number of hash features to be larger (especially with more data). We might also restrict the penalty and mixture parameters to have a more restricted upper bound.

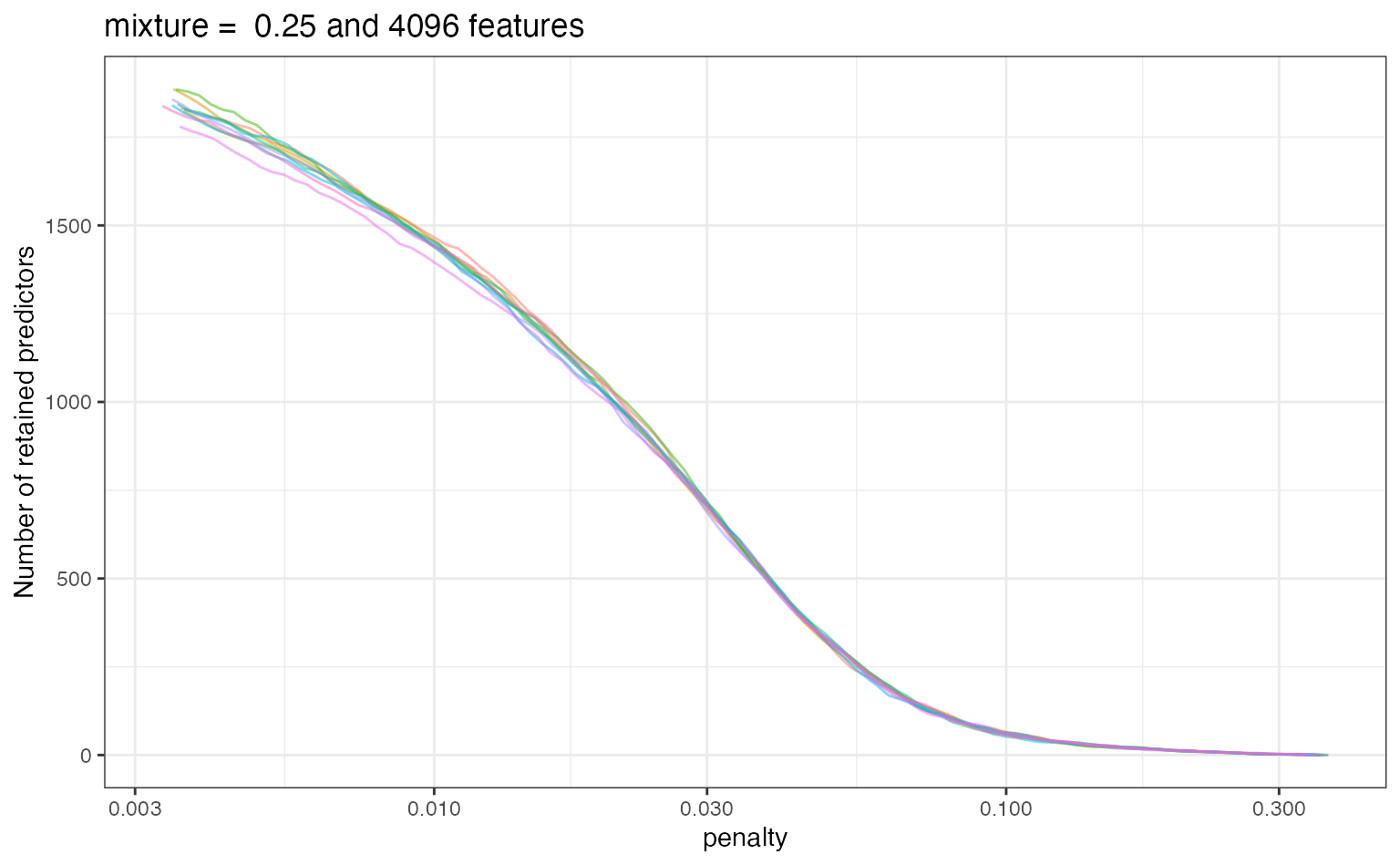

Extracted Results

Jumping back to the grid search results, let’s examine the results of our extract function. For each fitted model, a tibble was saved that has the relationship between the number of predictors and the penalty value. Let’s look at these results for the best model:

params <- select_best(five_star_glmnet, metric = "roc_auc") params #> # A tibble: 1 x 4 #> penalty mixture num_terms .config #> <dbl> <dbl> <dbl> <chr> #> 1 0.0379 0.25 4096 Preprocessor3_Model031

Recall that we saved the glmnet results in a tibble. The column five_star_glmnet$.extracts is a list of tibbles. As an example, the first element of the list is:

five_star_glmnet$.extracts[[1]] #> # A tibble: 300 x 5 #> num_terms penalty mixture .extracts .config #> <dbl> <dbl> <dbl> <list> <chr> #> 1 256 1 0 <tibble [100 × 2]> Preprocessor1_Model001 #> 2 256 1 0 <tibble [100 × 2]> Preprocessor1_Model002 #> 3 256 1 0 <tibble [100 × 2]> Preprocessor1_Model003 #> 4 256 1 0 <tibble [100 × 2]> Preprocessor1_Model004 #> 5 256 1 0 <tibble [100 × 2]> Preprocessor1_Model005 #> 6 256 1 0 <tibble [100 × 2]> Preprocessor1_Model006 #> 7 256 1 0 <tibble [100 × 2]> Preprocessor1_Model007 #> 8 256 1 0 <tibble [100 × 2]> Preprocessor1_Model008 #> 9 256 1 0 <tibble [100 × 2]> Preprocessor1_Model009 #> 10 256 1 0 <tibble [100 × 2]> Preprocessor1_Model010 #> # … with 290 more rows

More nested tibbles! Let’s unnest five_star_glmnet$.extracts:

library(tidyr) extracted <- five_star_glmnet %>% dplyr::select(id, .extracts) %>% unnest(cols = .extracts) extracted #> # A tibble: 3,000 x 6 #> id num_terms penalty mixture .extracts .config #> <chr> <dbl> <dbl> <dbl> <list> <chr> #> 1 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model001 #> 2 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model002 #> 3 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model003 #> 4 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model004 #> 5 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model005 #> 6 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model006 #> 7 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model007 #> 8 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model008 #> 9 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model009 #> 10 Fold01 256 1 0 <tibble [100 × 2]> Preprocessor1_Model010 #> # … with 2,990 more rows

One thing to realize here is that tune_grid() may not fit all of the models that are evaluated. In this case, for each value of mixture and num_terms, the model is fit overall all penalty values5. To select the best parameter set, we can exclude the penalty column in extracted:

extracted <- extracted %>% dplyr::select(-penalty) %>% inner_join(params, by = c("num_terms", "mixture")) %>% # Now remove it from the final results dplyr::select(-penalty) extracted #> # A tibble: 200 x 6 #> id num_terms mixture .extracts .config.x .config.y #> <chr> <dbl> <dbl> <list> <chr> <chr> #> 1 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 2 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 3 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 4 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 5 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 6 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 7 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 8 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 9 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> 10 Fold01 4096 0.25 <tibble [100 ×… Preprocessor3_Mod… Preprocessor3_Mo… #> # … with 190 more rows

Now we can get at the results that we want using another unnest:

extracted <- extracted %>% unnest(col = .extracts) # <- these contain a `penalty` column extracted #> # A tibble: 20,000 x 7 #> id num_terms mixture penalty num_vars .config.x .config.y #> <chr> <dbl> <dbl> <dbl> <int> <chr> <chr> #> 1 Fold01 4096 0.25 0.360 0 Preprocessor3_Mo… Preprocessor3_Mo… #> 2 Fold01 4096 0.25 0.344 1 Preprocessor3_Mo… Preprocessor3_Mo… #> 3 Fold01 4096 0.25 0.328 2 Preprocessor3_Mo… Preprocessor3_Mo… #> 4 Fold01 4096 0.25 0.313 2 Preprocessor3_Mo… Preprocessor3_Mo… #> 5 Fold01 4096 0.25 0.299 3 Preprocessor3_Mo… Preprocessor3_Mo… #> 6 Fold01 4096 0.25 0.286 3 Preprocessor3_Mo… Preprocessor3_Mo… #> 7 Fold01 4096 0.25 0.273 4 Preprocessor3_Mo… Preprocessor3_Mo… #> 8 Fold01 4096 0.25 0.260 5 Preprocessor3_Mo… Preprocessor3_Mo… #> 9 Fold01 4096 0.25 0.248 7 Preprocessor3_Mo… Preprocessor3_Mo… #> 10 Fold01 4096 0.25 0.237 7 Preprocessor3_Mo… Preprocessor3_Mo… #> # … with 19,990 more rows

Let’s look at a plot of these results (per resample):

ggplot(extracted, aes(x = penalty, y = num_vars)) + geom_line(aes(group = id, col = id), alpha = .5) + ylab("Number of retained predictors") + scale_x_log10() + ggtitle(paste("mixture = ", params$mixture, "and", params$num_terms, "features")) + theme(legend.position = "none")

These results might help guide the range of the penalty value if more optimization was conducted.

This is a small sample of the overall data set. When more data are used, a larger feature set is optimal.↩︎

See the last section below for more details.↩︎

See the vignette on acquisition functions for more details.↩︎

Sorry, pun intended.↩︎

This is a feature of this particular model and is not generally true for other engines.↩︎